Key Takeaways

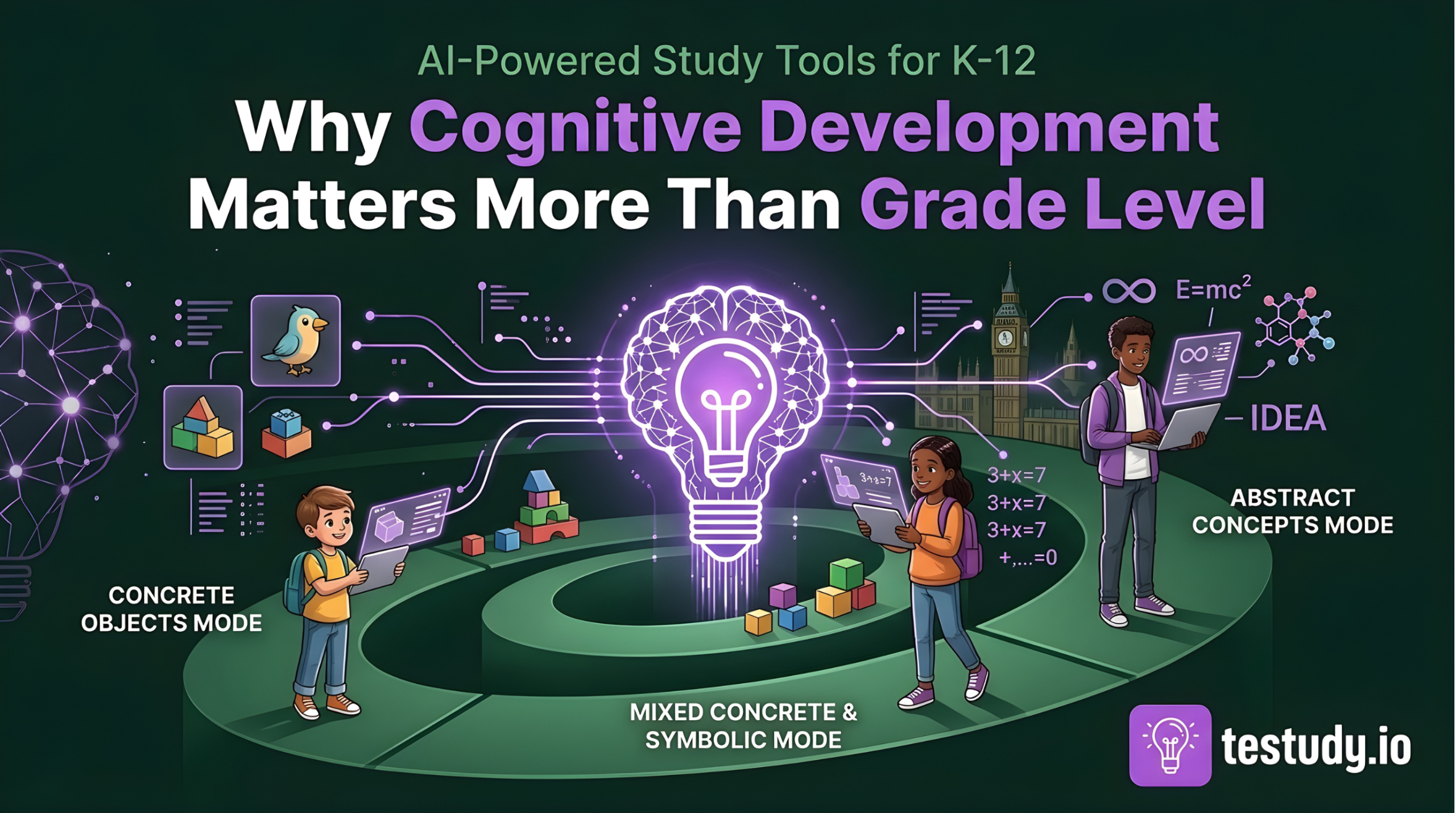

- AI for K-12 must adapt the cognitive mode of content (concrete vs. abstract), not just the difficulty level or vocabulary.

- Active recall and spaced repetition algorithms must be modified for shorter attention spans and different memory patterns in younger learners.

- Dashboards should report on cognitive engagement (e.g., scaffold usage, mode preference) rather than just scores.

- Privacy and ethical limits are non-negotiable; AI is a support tool, not a diagnostic replacement for human experts.

- The goal is to build a scaffold from a child’s current cognitive reality to the next level, not to force adult-like thinking.

Introduction

The EdTech market is flooded with AI study tools promising personalized learning for every student. But a critical flaw undermines most offerings for the K-12 market: they treat cognitive development as a matter of reading level or grade alignment, not as a fundamental shift in how a child thinks. An AI that successfully generates multiple-choice questions for a medical student will fail, and potentially frustrate, a second grader, even if the vocabulary is simplified. The difference isn’t just knowledge; it’s the architecture of thought. This article argues that effective AI-powered study tools for K-12 must be explicitly designed around Piaget’s stages of cognitive development. The goal is not to create ‘easier’ content, but to present complex knowledge in modes that align with a child’s current capacity for concrete operation, logical reasoning, or abstract hypothesis. When AI respects these developmental realities, it becomes a scaffold for cognitive growth. When it ignores them, it becomes just another source of cognitive overload.

The Critical Mistake: Scaling Down Adult AI Without Rethinking Cognition

The typical approach to K-12 AI tools is reductionist: take the adult algorithm, lower the Lexile score, add some cartoon graphics. This is insufficient. A 10-year-old in Piaget’s Concrete Operational stage can think logically about objects and events they can directly perceive, but struggles with abstract, hypothetical reasoning. An AI generating a question like ‘What would happen to the economy if all bees disappeared?’ is asking for the systematic hypothetico-deductive reasoning of a Formal Operational thinker. For a Concrete Operational child, this question is cognitively out of reach, no matter how simply it’s worded. The confusion and anxiety this mismatch creates are likely a major reason students reject ‘smart’ learning tools. The tool feels alien, not adaptive. The first step in building a real K-12 solution is to abandon the metaphor of ‘scaling down’ and adopt the metaphor of ‘translating’ content into the appropriate cognitive modality.

The Piagetian Framework: A Map for Developmentally Appropriate AI

Jean Piaget’s theory of cognitive development provides the essential map. Of his four stages, three are relevant to schooling (the earliest, Sensorimotor, covers roughly birth to age 2):

- Preoperational (2-7 years): Intuitive, egocentric thought. Symbolic play develops, but systematic logic has not yet emerged. Learning happens through sensory and motor interaction and vivid imagery. AI tools here must be highly visual, interactive, and tied to immediate perception. Questions must be about the ‘here and now’ or familiar stories.

- Concrete Operational (7-11 years): Logical thought emerges, but only for concrete, physical objects and events. Conservation, classification, and seriation are possible. Abstract symbols (like algebraic variables) are difficult. AI must present problems with tangible, manipulable elements. A math problem about ‘apples’ is concrete; using ‘x’ is abstract. The tool should allow virtual manipulation of objects.

- Formal Operational (11+ years): Abstract, hypothetical, and systematic reasoning develops. Adolescents can think about possibilities, formulate hypotheses, and test them mentally. This is when traditional academic subjects (algebra, scientific theory, philosophical debate) become accessible. AI can now introduce abstract symbols, counterfactual scenarios, and multi-variable problems.

A common misconception is that the stages are rigid. They are not. They are broad, overlapping tendencies. An AI tool’s job is not to ‘diagnose’ a stage, but to offer content and interaction modes appropriate for the range of a typical classroom and, with support (scaffolding), let the student gradually begin operating in the next stage.

From Theory to Algorithm: Adapting Core Study Techniques

How do we translate these stages into the core mechanics of a study tool?

Active Recall: For a Preoperational learner, recall is not verbal. It’s pointing, matching, or acting out. An AI must generate image-based or action-based prompts. For a Concrete Operational learner, recall questions must reference specific, tangible examples from the lesson (‘What did the character do with the red ball?’). Abstract definition recall (‘Define osmosis’) is inappropriate. For Formal Operational learners, recall can target principles and abstract concepts.

Spaced Repetition: The spacing interval algorithm cannot be one-size-fits-all. Working memory capacity and attention span differ dramatically. A 7-year-old may need a review after 4 hours, not 1 day. The ‘decay’ curve is steeper for concrete facts in younger learners. Furthermore, the type of review item must change. A review for a 9-year-old on a science topic might be a concrete, image-based classification task. A review for a 14-year-old on the same topic might be a short-answer explanation of the underlying principle.
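The idea of age-dependent spacing can be sketched in a few lines. This is a minimal illustration, not a validated scheduler: the age bands, first-review intervals, and growth factors are all hypothetical placeholders (only the 4-hour and 1-day starting points echo the example above), and the names `age_band`, `next_interval`, and `review_format` are invented for this sketch.

```python
from datetime import timedelta

# Hypothetical first-review intervals per age band. The 4-hour and 1-day
# values echo the example in the text; the middle value is a placeholder.
FIRST_REVIEW = {
    "under_8": timedelta(hours=4),
    "8_to_11": timedelta(hours=12),
    "12_plus": timedelta(days=1),
}

# Hypothetical growth factors: a smaller factor lengthens intervals more
# slowly, standing in for a steeper forgetting curve in younger learners.
GROWTH = {"under_8": 1.5, "8_to_11": 2.0, "12_plus": 2.5}

# Hypothetical review formats, following the 9-year-old vs. 14-year-old example.
REVIEW_FORMAT = {
    "under_8": "image_matching",
    "8_to_11": "image_classification",
    "12_plus": "short_answer_explanation",
}


def age_band(age: int) -> str:
    """Map an age in years to one of the illustrative bands."""
    if age < 8:
        return "under_8"
    if age < 12:
        return "8_to_11"
    return "12_plus"


def next_interval(age: int, successful_reviews: int) -> timedelta:
    """Time to wait before the next review after N correct recalls in a row."""
    band = age_band(age)
    return FIRST_REVIEW[band] * (GROWTH[band] ** successful_reviews)


def review_format(age: int) -> str:
    """Pick the type of review item, not just its timing."""
    return REVIEW_FORMAT[age_band(age)]
```

In a real tool these constants would be fitted to observed recall data per learner rather than fixed by age; the point of the sketch is only that both the interval and the item format are functions of the learner, not global settings.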

Question Generation: This is the most visible adaptation. For Concrete Operational students, AI must generate questions anchored in specific, non-hypothetical scenarios. Compare: ‘Why did the character feel sad?’ (concrete, based on story events) vs. ‘How might the story change if the setting were different?’ (hypothetical, formal operational). The AI’s comprehension engine must not just extract facts, but tag them by concreteness and assign them to the appropriate question-generation template.
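A rough sketch of the tagging step might look like the following. It assumes a crude keyword heuristic as a stand-in for a real classifier; the cue list, the stage names used as dictionary keys, and the functions `tag_mode` and `select_questions` are all hypothetical.

```python
from enum import Enum


class Mode(Enum):
    CONCRETE = "concrete"
    HYPOTHETICAL = "hypothetical"


# Hypothetical cue phrases suggesting a question demands hypothetical
# reasoning. A production system would use a trained classifier instead.
HYPOTHETICAL_CUES = ("what if", "how might", "would happen", "suppose", "imagine if")

# Which question modes each stage is offered (illustrative mapping).
ALLOWED_MODES = {
    "preoperational": {Mode.CONCRETE},
    "concrete_operational": {Mode.CONCRETE},
    "formal_operational": {Mode.CONCRETE, Mode.HYPOTHETICAL},
}


def tag_mode(question: str) -> Mode:
    """Tag a candidate question by the mode of thought it requires."""
    lowered = question.lower()
    if any(cue in lowered for cue in HYPOTHETICAL_CUES):
        return Mode.HYPOTHETICAL
    return Mode.CONCRETE


def select_questions(candidates: list[str], stage: str) -> list[str]:
    """Keep only the candidates whose mode suits the given stage."""
    allowed = ALLOWED_MODES[stage]
    return [q for q in candidates if tag_mode(q) in allowed]
```

Run against the two example questions above, `select_questions` with `"concrete_operational"` keeps ‘Why did the character feel sad?’ and drops ‘How might the story change if the setting were different?’. A hard filter is the simplest design; a scaffolding-oriented tool might instead keep a small share of hypothetical questions and attach a concrete hint to each.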

Beyond the Question: Dashboard Design and Interventional Cues for Adults

The parent or teacher dashboard is where trust is built or broken. If it only shows ‘70% correct on quizzes,’ it provides no developmental insight. A trustworthy dashboard must surface metrics that reflect cognitive engagement, not just accuracy.

- Cognitive Load Indicators: Did the student repeatedly abandon questions tagged as ‘abstract’? This signals a potential mismatch, not just a knowledge gap.

- Scaffold Usage: Is the student consistently using the ‘concrete hint’ feature? This shows they are operating at the edge of their capacity, a positive sign of learning, not a weakness.

- Mode Preference: Does a 10-year-old consistently choose ‘video example’ over ‘text explanation’? This is valuable data about their current cognitive preference.

The dashboard should guide intervention. Instead of ‘John is failing chemistry,’ it should say, ‘John struggles with abstract mole-concept problems but excels at concrete lab procedure questions. Recommend more hands-on virtual lab activities before reintroducing the symbolic equations.’ This reframes the problem from student failure to tool/instructional mismatch, which is profoundly anxiety-reducing for the adult and empowering for the student.
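As a minimal sketch of how such cues could be derived, the snippet below computes three engagement metrics from a list of question attempts and turns them into a mismatch flag. The `Attempt` fields, the metric names, and both thresholds are assumptions made for illustration, not values drawn from any real product.

```python
from dataclasses import dataclass


@dataclass
class Attempt:
    tag: str          # "concrete" or "abstract" (hypothetical tagging scheme)
    abandoned: bool   # student left the question without answering
    used_hint: bool   # student opened the 'concrete hint' scaffold
    correct: bool     # answer was correct (False if abandoned)


def rate(flags) -> float:
    """Share of True values; 0.0 when there are no values."""
    flags = list(flags)
    return sum(flags) / len(flags) if flags else 0.0


def summarize(attempts: list[Attempt]) -> dict[str, float]:
    """Compute engagement metrics rather than a single overall score."""
    abstract = [a for a in attempts if a.tag == "abstract"]
    concrete = [a for a in attempts if a.tag == "concrete"]
    return {
        "abstract_abandon_rate": rate(a.abandoned for a in abstract),
        "concrete_accuracy": rate(a.correct for a in concrete),
        "hint_usage_rate": rate(a.used_hint for a in attempts),
    }


def intervention_cue(summary: dict[str, float],
                     abandon_threshold: float = 0.5,
                     accuracy_threshold: float = 0.7) -> str:
    """Flag a likely mode mismatch; thresholds are illustrative guesses."""
    if (summary["abstract_abandon_rate"] >= abandon_threshold
            and summary["concrete_accuracy"] >= accuracy_threshold):
        return ("Possible mode mismatch: strong on concrete items, "
                "frequently abandons abstract ones.")
    return "No mode mismatch flagged."
```

The cue is deliberately phrased as a possibility. It describes an interaction pattern for the adult to investigate, in keeping with the framing of tool/instructional mismatch rather than student failure.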

Non-Negotiables: Privacy, Ethics, and the Limits of AI

For K-12 tools, privacy is not a feature; it is the foundation. Compliance with COPPA in the US and with the GDPR’s child-specific provisions in the EU (often shortened to GDPR-K) is table stakes. Data collection must be minimized. Behavioral data used to infer cognitive preferences must be anonymized and aggregated at the classroom level before it is used for model improvement, and it must never be sold.
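One way to picture classroom-level aggregation is the sketch below. It assumes events arrive as dictionaries with hypothetical `classroom_id`, `student_id`, and `used_hint` keys, and it adds a minimum-group-size rule that is this sketch's own assumption rather than something the regulations above prescribe. It illustrates the data flow only and is not a compliance mechanism.

```python
from collections import defaultdict

# Hypothetical threshold: classrooms with fewer distinct students are
# suppressed so that a tiny group cannot be traced back to individuals.
MIN_GROUP_SIZE = 5


def aggregate_by_classroom(events, min_group_size=MIN_GROUP_SIZE):
    """Reduce per-student events to per-classroom counts without student IDs."""
    students = defaultdict(set)
    hint_uses = defaultdict(int)
    for event in events:
        classroom = event["classroom_id"]
        students[classroom].add(event["student_id"])
        if event["used_hint"]:
            hint_uses[classroom] += 1
    # Student IDs are used only to count group size and never leave this function.
    return {
        classroom: {"students": len(ids), "hint_uses": hint_uses[classroom]}
        for classroom, ids in students.items()
        if len(ids) >= min_group_size
    }
```

Only the returned dictionary would be passed on for model improvement; the raw per-student events would stay within the stricter retention and access rules that apply to the classroom itself.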

More importantly, we must state the limits of AI clearly. An AI tool cannot diagnose a learning disability. It cannot replace a trained educator’s observation of a child’s reasoning process during a hands-on activity. It cannot understand the socio-emotional context of a student’s frustration. Its inferences about ‘cognitive stage’ are probabilistic guesses based on interaction patterns, not psychological assessments. Any tool implying otherwise is unethical. The honest signal is a tool that says: ‘We adapt our content presentation based on observed patterns. For a full developmental assessment, consult a professional.’ This honesty builds more trust than any claimed ‘AI-powered diagnosis.’

Conclusion: Building Bridges, Not Just Better Quizzes

The promise of AI in education is not to automate testing, but to scale personalized cognitive support. For K-12 learners, this personalization must be rooted in the well-established science of how children’s minds develop. A tool that asks a 9-year-old to think like a 16-year-old is not personalized; it’s neglectful. The next generation of effective EdTech will be judged not by the sophistication of its neural networks, but by the depth of its respect for Piaget’s stages. The goal is to build a bridge from a child’s current cognitive reality to the next level of understanding. That bridge must be made of the right materials (concrete examples, visual scaffolds, and tangible manipulations) for the child standing at the beginning of it. Anything else is just a wider, more frustrating chasm.

Conclusion

Ultimately, the question for any AI study tool targeting K-12 students is simple: ‘Does this respect the way children think?’ The answer must be found in the design of the questions, the spacing of the reviews, and the clarity of the dashboard, all filtered through the lens of cognitive development. When an EdTech company commits to this principle, it moves from being a quiz generator to being a true learning companion. For parents and educators, the evaluation criterion shifts from ‘Is this fun?’ to ‘Does this align with my child’s developmental stage and gently push them forward?’ That is the standard for real educational value.

Food for Thought

Think of a student you know (your child, a student, or yourself as a child). What is a concrete, tangible example from their world that could make an abstract concept you teach (or learned) suddenly make sense?

When you feel overwhelmed by learning something new, is it usually because the material feels too abstract and disconnected, or because it’s presented in a mode that doesn’t match how you naturally think?

If you were designing an AI quiz for a 9-year-old on the water cycle, what is the first, most concrete image or question you would generate? What would you avoid asking?

Consider your own use of study tools. Do you prefer concrete examples first and then abstract principles, or do you prefer to grasp the abstract theory first? Your preference likely aligns with your own cognitive development history.

Frequently Asked Questions

If my 10-year-old is ‘gifted,’ should they be using tools designed for the Formal Operational stage?

Not necessarily. Cognitive advancement is domain-specific. A child may be advanced in mathematical reasoning (formal operational) but still be concrete operational in social sciences or literature. The tool should adapt per subject area. Forcing ‘abstract’ mode in a domain where they are still concrete can create frustration. Look for tools that set the stage per subject, and ideally per topic, rather than applying a single setting to the whole child.

How can I tell if an AI tool is truly developmentally adapted or just using simpler words?

Examine the question types. Does it ask for hypothetical reasoning (‘What if…?’) with younger students? Does it rely on definitions without concrete examples? A truly adapted tool will offer concrete manipulatives, image-based prompts, and questions anchored in specific, tangible scenarios for Concrete Operational learners. The adaptation is in the mode of thought required, not just the vocabulary.

What about students with learning differences like dyslexia or ADHD? Does this framework apply?

Absolutely, and it’s even more critical. Piagetian stages describe qualitative shifts in thinking. A student with dyslexia may be fully Formal Operational in reasoning but need text-to-speech or other accessibility tools. A student with ADHD may reason at a typical Concrete Operational level but need shorter, more frequent sessions (which affects spaced repetition intervals). The cognitive stage is the ‘what’ of thinking; learning differences often affect the ‘how’ of processing. A good tool will accommodate both.

Is there a risk that labeling a child’s ‘stage’ will create a self-fulfilling prophecy or limit expectations?

Yes, which is why the tool and the adult must use this language carefully. The stage is a description of the child’s predominant mode, not a ceiling. The tool’s purpose is to provide the scaffolds that allow the child to operate just beyond their current independent stage (Vygotsky’s Zone of Proximal Development). The dashboard should highlight ‘scaffold usage’ as a positive sign of growth, not a deficit. The language should be ‘working with concrete examples,’ not ‘stuck in the concrete stage.’

How does this apply to language learning, which Testudy also covers?

Language acquisition has its own stages (e.g., Krashen’s monitor model, silent period). However, the cognitive demands of the content being learned in the new language still follow Piaget. Learning vocabulary for ‘kitchen objects’ (concrete) is different from learning to debate ‘philosophical idealism’ (abstract) in that language. The AI must adapt the complexity of the target concept to the learner’s cognitive stage in their native language, then present it in the new language. You wouldn’t ask a 7-year-old to debate abstract concepts in their native tongue, so you shouldn’t in a second language either.