Key Takeaways

- The carbon cost of AI-driven learning is dominated by data center energy use, not your laptop’s power cord.

- A platform’s AI model efficiency and its data center’s energy source are the two biggest determinants of its per-quiz footprint.

- Digital is not automatically greener than paper; the comparison depends on the number of uses and the full lifecycle of both options.

- True sustainability requires transparency: companies should measure and report their emissions per service unit.

- As a learner, you can reduce your footprint by using devices efficiently, extending their life, and choosing providers with verifiable green practices.

1. Introduction

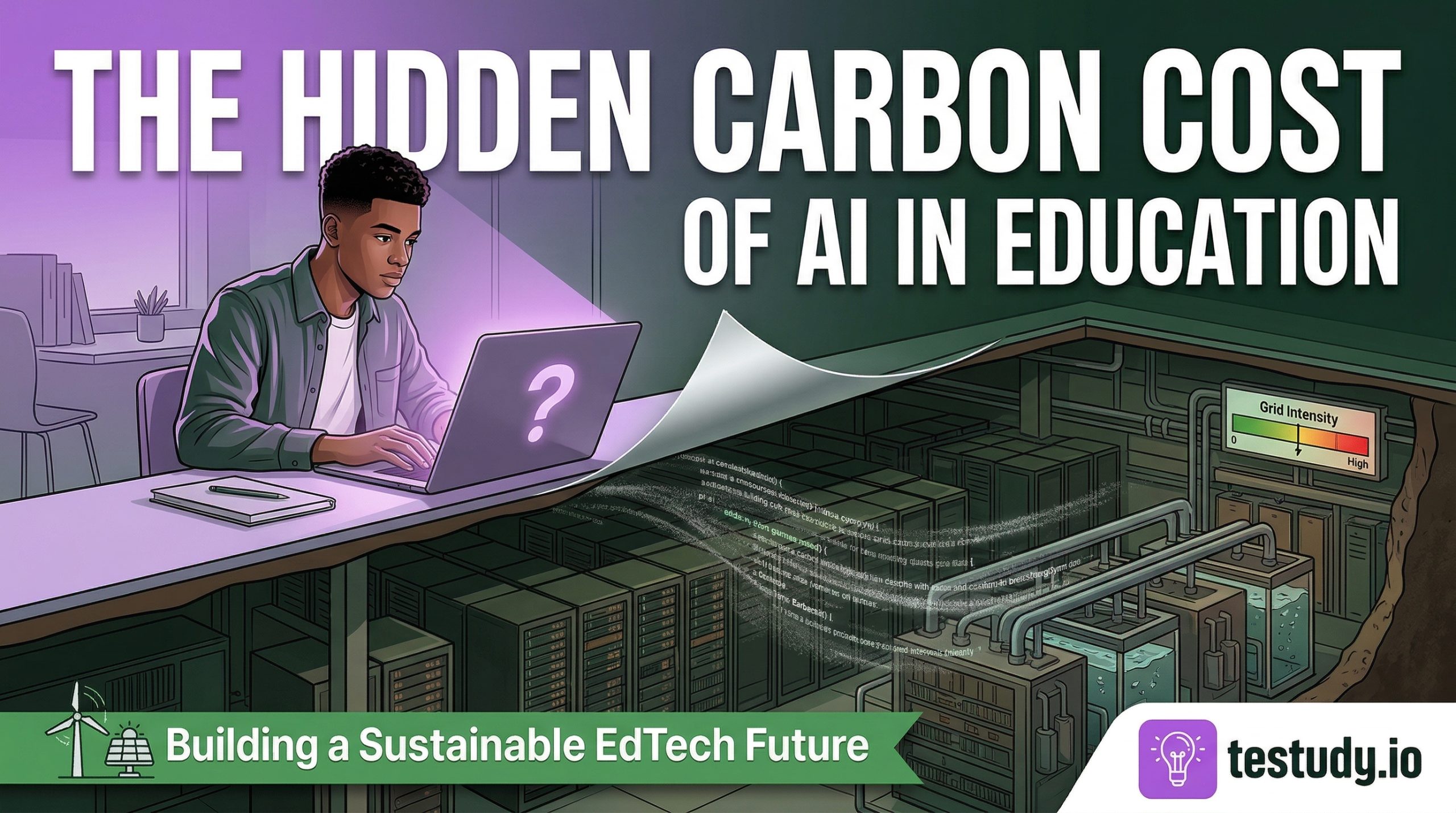

When you fire up an AI-powered study tool like Testudy to generate a quiz from your notes, the last thing on your mind is probably the environmental cost of that action. We’re conditioned to see digital as inherently clean, a disembodied, weightless alternative to paper. But behind that seamless quiz generation lies a vast physical infrastructure of data centers, network hardware, and energy grids, all consuming real electricity and generating real carbon emissions. The paradox of ‘green’ digital learning is that its environmental impact is often invisible, making it easy to ignore. This article pulls back the curtain. We’ll move beyond simplistic ‘paper vs. pixel’ debates to examine the specific carbon costs of AI in education, from the initial training of a model to the moment you hit ‘submit’ on a multiple-choice question. Our goal isn’t to induce guilt, but to foster informed clarity. Understanding where the impact truly lies is the first step toward building, and choosing, genuinely sustainable learning technology.

2. Deconstructing the Footprint: AI Training, Inference, and Data Centers

To understand the carbon cost, we must separate two distinct phases of an AI model’s life: training and inference. Training is the one-time, computationally massive process of feeding data to an algorithm to create the model. For a large language model capable of comprehending your biology textbook, this can involve thousands of specialized GPUs running for weeks and emitting many tons of CO2e. For end users like you, however, training is a sunk cost. Your direct environmental interaction is with inference: querying the already-trained model to generate a quiz or evaluate an answer. A single inference is small in isolation (by some estimates, comparable to the energy of a few seconds of smartphone use), but scale is everything. A platform serving millions of quiz attempts daily adds up to a significant, measurable load. The critical factor is inference efficiency: how much computational work (and thus energy) is required per useful output? A model designed specifically for quiz generation, using techniques like model pruning and quantization, can be far more efficient than a general-purpose chatbot repurposed for education, potentially by orders of magnitude.

The second major component is the data center, where the physical servers reside. Its total facility energy isn’t just the compute chips. It also includes cooling systems (often 30-40% of total load in older facilities), power delivery losses, and networking hardware. The carbon intensity of the local energy grid powering that data center is the final multiplier. A query processed on a server powered by hydroelectricity in Norway has a radically different footprint than one on a coal-heavy grid in parts of Asia. The ‘cloud’ is not a monolith. It is a geographically distributed network with a patchwork of energy sources.
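The multiplication described above can be made concrete. The per-quiz operational footprint is server energy times the facility overhead multiplier (PUE) times grid carbon intensity. The sketch below is a minimal Python version that also scales the result up to a day of traffic. It ignores training and embodied hardware emissions. Every number in it is an illustrative assumption, not a measured value: 2 Wh of server energy per quiz, a PUE of 1.2, about 30 g CO2e/kWh for a hydro-dominated grid, and about 800 g CO2e/kWh for a coal-heavy one. The function names are hypothetical.

```python
# Back-of-the-envelope model of operational inference emissions.
# All inputs in the example below are illustrative assumptions, not measurements.


def query_emissions_g(it_energy_wh: float, pue: float, grid_g_per_kwh: float) -> float:
    """Grams of CO2e for one query.

    it_energy_wh:   energy drawn by the servers (IT equipment) for the query, in Wh
    pue:            facility overhead multiplier (total facility energy / IT energy)
    grid_g_per_kwh: carbon intensity of the electricity supply, in g CO2e per kWh
    """
    facility_kwh = it_energy_wh * pue / 1000.0  # add cooling/power overhead, convert Wh -> kWh
    return facility_kwh * grid_g_per_kwh


def daily_emissions_kg(queries_per_day: int, per_query_g: float) -> float:
    """Scale a per-query footprint up to kilograms of CO2e per day."""
    return queries_per_day * per_query_g / 1000.0


hydro = query_emissions_g(2.0, 1.2, 30)   # assumed hydro-dominated grid
coal = query_emissions_g(2.0, 1.2, 800)   # assumed coal-heavy grid
print(f"hydro: {hydro:.3f} g/quiz, coal: {coal:.3f} g/quiz")
print(f"1M quizzes/day on the coal grid: {daily_emissions_kg(1_000_000, coal):.0f} kg CO2e")
```

Under these assumptions, the same quiz is roughly 27 times more carbon-intensive on the coal grid. That holds even though the code, hardware, and PUE are identical.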

3. Beyond the Algorithm: Server Efficiency, Cooling, and the Energy Grid

Let’s zoom in on the data center. The industry measures its efficiency with PUE (Power Usage Effectiveness): total facility energy divided by the energy delivered to IT equipment. A PUE of 1.0 would mean all power goes to IT equipment (servers, storage, networking). In reality, PUEs range from about 1.1 (highly efficient) to 1.8+ (older, less efficient facilities). Cooling is the biggest variable. Advanced techniques like liquid immersion cooling, or using outside air for ‘free cooling’ in cold climates, can drastically reduce the non-compute energy overhead. But even a PUE of 1.1 does little for the climate if the electricity itself is fossil-fuel based. This is where energy sourcing becomes paramount. Leading tech companies sign Power Purchase Agreements (PPAs) that fund new renewable capacity on the same grids their data centers draw from. Simply buying ‘green’ energy credits from a distant wind farm does not necessarily decarbonize the local grid where your data center operates. For an EdTech company, the responsible path is to: 1) choose data center partners with low PUEs and transparent sustainability roadmaps; 2) locate or contract compute in regions with greener grids; and 3) be transparent about these choices. The most sustainable server is the one that doesn’t run, which links back to software efficiency. The most efficient AI model, running on the cleanest and most efficiently cooled hardware, represents the optimized ideal.
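The sketch below shows the PUE definition and why energy sourcing can outweigh PUE. It is a short Python illustration. The two facilities, their shared 10,000 kWh IT load, and their grid intensities (700 and 50 g CO2e/kWh) are hypothetical numbers chosen for illustration. The function names are also hypothetical.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy divided by IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def overhead_share(pue_value: float) -> float:
    """Fraction of facility energy that never reaches IT equipment (cooling, power losses, etc.)."""
    return 1.0 - 1.0 / pue_value


def facility_emissions_kg(it_kwh: float, pue_value: float, grid_g_per_kwh: float) -> float:
    """Emissions for a given IT load, scaled up by PUE and by the grid's carbon intensity."""
    return it_kwh * pue_value * grid_g_per_kwh / 1000.0


it_load = 10_000  # kWh of IT energy, assumed identical for both facilities
efficient_fossil = facility_emissions_kg(it_load, 1.1, 700)  # low PUE, fossil-heavy grid (assumed)
older_clean = facility_emissions_kg(it_load, 1.5, 50)        # higher PUE, low-carbon grid (assumed)

print(f"PUE for 11,000 kWh total / 10,000 kWh IT: {pue(11_000, it_load):.2f}")
print(f"non-IT overhead at PUE 1.8: {overhead_share(1.8):.0%}")
print(f"efficient but fossil-powered: {efficient_fossil:.0f} kg CO2e")
print(f"less efficient but clean: {older_clean:.0f} kg CO2e")
```

With these assumed inputs, the low-PUE facility on the fossil-heavy grid emits about ten times as much as the less efficient one on the low-carbon grid: 7,700 vs. 750 kg CO2e. A PUE of 1.8 also means roughly 44% of the facility's energy never reaches the servers.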

4. Digital vs. Paper: A Nuanced Lifecycle Analysis

Now for the classic debate. The intuitive appeal of ‘save trees’ is strong, but a proper Life Cycle Assessment (LCA) tells a more complex story. Suppose we compare a ‘Digital Quiz (1,000 uses)’ with a ‘Printed Quiz Pack (1,000 sheets).’

For paper, the major impacts are in material extraction (pulp, chemicals), manufacturing (energy-intensive milling and printing), and transportation (trucking paper products). The use phase (reading) has near-zero operational energy. End-of-life can be recycling (beneficial) or landfill (methane emissions). For digital, the manufacturing phase is heavy: mining rare earths for devices and servers, plus semiconductor fabrication. The use phase is where the ongoing impact lives: data center energy, network transmission, and your device’s electricity. End-of-life involves complex e-waste challenges.

So, which wins? It depends on usage intensity. For a document read once or twice, paper often has a lower total footprint. The digital option’s heavy fixed manufacturing cost is spread over so few uses, while the paper copy needs no operational energy at all. For a document accessed thousands of times (like a core study resource), the balance flips. Digital’s fixed cost is spread across so many accesses that its near-zero marginal cost per access beats printing a fresh copy each time. Reuse helps paper too: a single textbook reused for a decade has a tiny annual footprint. Likewise, a digital quiz generated once and accessed 10,000 times spreads its initial data center and device cost extremely thin. The key variable for EdTech is durability and reuse. A platform that helps a student master a subject permanently creates a profound indirect saving. It eliminates the need for repeated courses or re-purchased materials and so avoids future resource consumption.
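The usage-intensity argument reduces to a fixed cost plus a per-use cost for each option, with a break-even point where the two totals cross. The sketch below is a minimal Python version of that linear model with made-up inputs. It assumes roughly 10 g CO2e per printed sheet with no setup cost. For the digital quiz, it assumes 2,000 g CO2e of device and server manufacturing allocated to the quiz, plus 0.5 g per access. Real LCAs are far more detailed, so the point is the shape of the comparison, not the numbers. The function names are hypothetical.

```python
import math
from typing import Optional


def total_footprint(uses: int, fixed_g: float, per_use_g: float) -> float:
    """Lifecycle footprint in g CO2e: a one-time (embodied) cost plus a cost per use."""
    return fixed_g + uses * per_use_g


def break_even_uses(paper_fixed_g: float, paper_per_use_g: float,
                    digital_fixed_g: float, digital_per_use_g: float) -> Optional[int]:
    """Smallest number of uses at which digital's total is <= paper's.

    Returns None if digital never catches up (its per-use cost is not lower).
    """
    saving_per_use = paper_per_use_g - digital_per_use_g
    if saving_per_use <= 0:
        return None
    extra_fixed = digital_fixed_g - paper_fixed_g
    if extra_fixed <= 0:
        return 0  # digital is already no worse from the first use
    return math.ceil(extra_fixed / saving_per_use)


# Illustrative assumptions only.
PAPER_FIXED, PAPER_PER_USE = 0.0, 10.0        # one printed sheet per use
DIGITAL_FIXED, DIGITAL_PER_USE = 2000.0, 0.5  # allocated hardware share + per-access cost

for uses in (2, 1000):
    p = total_footprint(uses, PAPER_FIXED, PAPER_PER_USE)
    d = total_footprint(uses, DIGITAL_FIXED, DIGITAL_PER_USE)
    print(f"{uses} uses: paper {p:.0f} g, digital {d:.0f} g")
print("break-even at", break_even_uses(PAPER_FIXED, PAPER_PER_USE, DIGITAL_FIXED, DIGITAL_PER_USE), "uses")
```

With these inputs, paper wins easily at 2 uses (20 g vs. 2,001 g). Digital wins at 1,000 uses (2,500 g vs. 10,000 g), and the lines cross at 211 uses.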

5. What EdTech Companies Must Do: Transparency and Optimization

Given this complexity, what constitutes responsible operation? First, measurement. Companies must move beyond vague claims. They need to estimate their Scope 2 (purchased energy) and Scope 3 (value chain, including user device energy and data transmission) emissions using recognized frameworks. Examples include the Green Software Foundation’s Software Carbon Intensity (SCI) specification and the ISO 14064 standard. This requires collaboration with cloud providers (AWS, Google Cloud, and Azure all offer carbon footprint tools). Second, optimization. This is where product development meets climate action. It means architecting software for computational efficiency and using smaller, specialized models instead of giant general ones. It also means implementing intelligent caching so common quiz results don’t require recomputation. Finally, it means designing features that encourage focused, efficient study sessions, since the ultimate efficiency is learning well in less time. Third, sourcing: committing to data centers with high renewable energy percentages and low PUEs. This is a business decision with a climate impact. Finally, transparency. Publishing an annual sustainability report with clear metrics (e.g., grams of CO2e per 1,000 quiz generations) builds trust and allows for industry benchmarking. It signals that the company views environmental responsibility as a core operational metric, not a marketing afterthought.
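The Green Software Foundation's SCI specification expresses a footprint as a rate, ((E × I) + M) per R. E is the energy consumed, I is the carbon intensity of that energy, M is the embodied hardware emissions allocated to the software, and R is a functional unit. The sketch below is a minimal Python version of that shape. It uses a quiz generation as R, so the result can be reported as grams of CO2e per 1,000 quiz generations. Several details are assumptions for illustration, not part of the specification: the dataclass, the field names, the measurement boundary (here, PUE is assumed to be folded into E), and the monthly numbers.

```python
from dataclasses import dataclass


@dataclass
class MeasurementWindow:
    """Hypothetical container for one reporting period's inputs."""
    energy_kwh: float      # E: energy used by the software (assumed boundary: servers incl. PUE)
    grid_g_per_kwh: float  # I: carbon intensity of that electricity, g CO2e per kWh
    embodied_g: float      # M: share of hardware manufacturing emissions allocated to the period
    quiz_generations: int  # R: number of functional units delivered in the period


def sci_per_unit(w: MeasurementWindow) -> float:
    """SCI-style rate: ((E * I) + M) per R, in g CO2e per quiz generation."""
    if w.quiz_generations <= 0:
        raise ValueError("need at least one quiz generation")
    return (w.energy_kwh * w.grid_g_per_kwh + w.embodied_g) / w.quiz_generations


def per_thousand(w: MeasurementWindow) -> float:
    """Reporting metric: g CO2e per 1,000 quiz generations."""
    return 1000 * sci_per_unit(w)


# Hypothetical month: 1,200 kWh at 300 g/kWh, 150 kg embodied, 2 million quizzes.
month = MeasurementWindow(energy_kwh=1200, grid_g_per_kwh=300,
                          embodied_g=150_000, quiz_generations=2_000_000)
print(f"{per_thousand(month):.0f} g CO2e per 1,000 quiz generations")
```

Reporting a rate rather than a total keeps the number comparable as the user base grows. A platform can double its traffic and still show improvement if the rate falls.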

6. What Learners Can Do: Conscious Consumption of Digital Study

While systemic change is driven by companies, user behavior influences scale. Here are practical, low-friction actions:

1) Close unnecessary applications and browser tabs during study. Background processes compete for CPU cycles and add to your device’s total energy draw.

2) Use dark mode on OLED screens. On OLED displays, black pixels are switched off and draw essentially no power, which adds up over long sessions.

3) Avoid high-bandwidth video within the study tool unless it is pedagogically necessary. Streaming video is one of the most energy-intensive web activities.

4) Study in longer, focused sessions rather than many short, scattered ones. Each app launch and network handshake has an overhead.

5) Power down or sleep your devices when not in use. The ‘always-on’ culture of devices adds up.

6) Choose platforms that are transparent about their infrastructure. If a company publishes its carbon metrics or uses certified green hosting, that’s a signal of responsible operation.

7) Extend the life of your devices. The emissions from manufacturing a new laptop or phone typically exceed those from its electricity use over several years. Keeping your current device in service is one of the most impactful things you can do.

These actions shift you from a passive consumer to a conscious participant in the digital ecosystem.

7. The Path Forward: Industry Standards and Certifications

The current landscape is fragmented, but standardization is emerging. The Green Software Foundation publishes the Software Carbon Intensity (SCI) specification. It also publishes tooling such as the Carbon Aware SDK, aimed at ‘carbon-aware’ applications that shift compute to times and places with cleaner energy. The Climate Neutral Data Centre Pact in Europe sets industry-wide targets for PUE, renewable energy, and water usage. Learners and institutions should look for platforms that reference these frameworks or hold certifications like ISO 50001 (energy management) or The Green Web Foundation’s hosting badge. These provide a baseline for comparison, moving sustainability from subjective ‘greenness’ to auditable performance. As a user, asking a provider ‘What is your PUE?’ or ‘Do you publish an annual carbon report?’ is a powerful way to drive accountability. The future of sustainable EdTech isn’t about one company’s heroics. It is about a transparent, certified, and efficiency-obsessed industry standard where ‘going green’ is baked into the product development lifecycle.
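The ‘carbon-aware’ pattern only applies to work that can wait, such as pre-generating practice quiz banks overnight. A student waiting for a quiz cannot be deferred. The sketch below is a minimal Python version that assumes a carbon-intensity forecast is already available as a dictionary keyed by (region, hour). The region names, forecast values, and job size are hypothetical. This is not the API of the Carbon Aware SDK or any other tool.

```python
from typing import Dict, Tuple

Slot = Tuple[str, int]  # (region, hour of day)

# Hypothetical forecast of grid carbon intensity, g CO2e per kWh.
forecast: Dict[Slot, float] = {
    ("region-a", 0): 420, ("region-a", 3): 380,
    ("region-b", 0): 120, ("region-b", 3): 60, ("region-b", 9): 30,
}


def pick_greenest_slot(forecast: Dict[Slot, float], deadline_hour: int) -> Slot:
    """Return the (region, hour) with the lowest forecast intensity that starts before the deadline."""
    eligible = {slot: g for slot, g in forecast.items() if slot[1] < deadline_hour}
    if not eligible:
        raise ValueError("no slot available before the deadline")
    return min(eligible, key=eligible.get)


job_kwh = 50  # assumed energy for a deferrable nightly batch job
slot = pick_greenest_slot(forecast, deadline_hour=6)
saved_kg = job_kwh * (forecast[("region-a", 0)] - forecast[slot]) / 1000
print(slot, f"avoids ~{saved_kg:.0f} kg CO2e vs. running immediately in region-a")
```

Here the job moves to region-b at hour 3. Under these assumptions, it avoids about 18 kg CO2e compared with running immediately in region-a. The slot at hour 9 would be cleaner still, but it misses the deadline. A real scheduler would also weigh latency and forecast uncertainty.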

8. Conclusion: Sustainable Learning as a Shared Responsibility

The sustainability of AI-driven education is not a simple checkbox. It is a multi-layered challenge spanning global infrastructure, corporate strategy, software engineering, and individual habit. The carbon cost of your next quiz is shaped by three factors: the efficiency of the AI model (software), the cleanliness of the server’s power (infrastructure), and the duration of your device use (behavior). For an EdTech company like Testudy, the imperative is clear: rigorously measure impact, relentlessly optimize for efficiency, and transparently source clean energy. For you, the learner, it means using tools wisely, extending device life, and favoring providers who demonstrate operational responsibility. The ultimate tension we must resolve is this: education is about long-term human flourishing, and our tools for that education must not undermine the planetary systems that support it. By making the invisible costs visible and acting on that knowledge, we can build a learning ecosystem that is not only effective but truly sustainable.

Conclusion

Understanding the environmental footprint of AI in education moves us from passive digital consumption to active, informed participation. The goal is not to abandon technology but to demand and build better technology: computationally frugal, powered renewably, and designed for lasting mastery rather than endless engagement. True educational efficiency and environmental efficiency are deeply aligned. Both seek to maximize valuable outcomes (knowledge retained, skills mastered) per unit of resource input (energy, time, material). As the sector matures, transparency will become the norm, and carbon-aware design will be a baseline expectation. Your role in this transition is to stay curious, ask the hard questions of the tools you use, and remember that the most sustainable study session is the one that helps you learn so well you never need to repeat it.

Food for Thought

Consider your most-used study material from last semester. Is it a digital file or a physical book? Based on the lifecycle principles discussed, which likely had a lower annual environmental impact, and why?

If your primary study platform published its ‘grams of CO2e per 1,000 quiz completions,’ how would that number influence your perception of its value or your usage habits?

The article emphasizes that the most sustainable study session is the one you don’t need because you mastered the material permanently. How does this reframe the goal of ‘efficient studying’ from a personal productivity lens to an environmental one?

Think about the device you use for studying. Do you know the average lifespan of its battery and its repairability score? How might that inform your next device purchase decision, balancing performance with environmental cost?

Frequently Asked Questions

Is using an AI study app actually worse for the environment than just reading a textbook?

For a single, brief session, a physical textbook you already own likely has a lower immediate footprint. However, the calculus changes with scale and reuse. An AI platform’s strength is in generating personalized, adaptive quizzes built on techniques like spaced repetition and active recall. These techniques can substantially improve retention, potentially eliminating the need to re-study the same material or purchase additional resources. The ‘avoided impact’ of not re-taking a course or buying more books can outweigh the digital platform’s operational cost over the long term. The key is the platform’s efficiency and your own device’s usage.

How much energy does one generated quiz actually consume?

A precise number is proprietary and depends on the model’s efficiency and server location. However, using industry estimates, a single AI inference (like generating a 10-question quiz) might account for between 0.1 and 5 grams of CO2e. For perspective, assume a 2.5 kW electric kettle on a grid emitting about 400 g CO2e per kWh. That range is then roughly the emissions from running the kettle for anywhere between well under a second and about 20 seconds. While tiny in isolation, it’s the aggregate of millions of daily quizzes that creates a significant total load, making optimization at the platform level critical.
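The kettle comparison is simple unit conversion. Dividing grams of CO2e by grid intensity gives energy, and dividing that energy by the kettle's power gives time. The sketch below is a minimal Python version. The 2.5 kW kettle and the 400 g CO2e/kWh grid are assumptions, and changing either one scales the answer proportionally. The function name is hypothetical.

```python
def kettle_seconds_equivalent(grams_co2e: float,
                              kettle_kw: float = 2.5,          # assumed kettle power
                              grid_g_per_kwh: float = 400.0    # assumed grid intensity
                              ) -> float:
    """Seconds a kettle would need to run to cause the same emissions."""
    kwh = grams_co2e / grid_g_per_kwh  # energy that would emit this much CO2e on the assumed grid
    hours = kwh / kettle_kw            # time for the kettle to draw that energy
    return hours * 3600


for g in (0.1, 5.0):
    print(f"{g} g CO2e ~ {kettle_seconds_equivalent(g):.2f} s of kettle time")
```

With these defaults, 0.1 g corresponds to about 0.36 s of kettle time and 5 g to about 18 s.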

If the data center uses renewable energy, is it ‘carbon neutral’?

It’s more accurate to say it has a much lower carbon intensity. ‘Carbon neutral’ typically requires offsetting any remaining emissions. Truly 24/7 matching of consumption to clean energy (via on-site generation or specific PPAs) is the gold standard but complex to achieve globally. Consider a data center on a grid with 80% renewables, where the remaining 20% comes from coal. It has an emissions factor roughly 80% lower than one on an all-coal grid. The location and time of energy use matter. A data center whose annual consumption is matched by wind and solar purchases may still draw fossil power on a calm, dark evening if storage is insufficient.
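The gap between annual and 24/7 matching appears as soon as supply and demand are compared hour by hour. The sketch below is a minimal Python version using a made-up four-hour profile: a constant load, plenty of wind early on, then calm. Both function names are hypothetical. The hourly version follows the general idea behind 24/7 matching, in which surplus clean energy in one hour cannot cover a shortfall in another.

```python
from typing import Sequence


def annual_match(consumption: Sequence[float], clean_supply: Sequence[float]) -> float:
    """Volumetric matching: total clean energy procured / total consumed, capped at 100%."""
    return min(sum(clean_supply) / sum(consumption), 1.0)


def hourly_match(consumption: Sequence[float], clean_supply: Sequence[float]) -> float:
    """24/7-style matching: only clean energy available in the same hour counts."""
    if len(consumption) != len(clean_supply):
        raise ValueError("profiles must cover the same hours")
    matched = sum(min(c, s) for c, s in zip(consumption, clean_supply))
    return matched / sum(consumption)


# Hypothetical four-hour snapshot, in MWh: constant load, windy then calm.
consumption = [10, 10, 10, 10]
clean_supply = [25, 15, 0, 0]

print(f"annual-style match: {annual_match(consumption, clean_supply):.0%}")
print(f"hourly match:       {hourly_match(consumption, clean_supply):.0%}")
```

Volumetrically, this facility is 100% matched. Yet only half of its consumption is covered by clean energy in the hour it is actually used.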

Does my old laptop or phone make the whole system inefficient?

Partly. Device efficiency is a significant part of the user-side footprint, although the AI model itself runs in the data center. Older devices tend to have less efficient processors and batteries, meaning they can use more energy for the same task. Loading and working through an AI-generated quiz on a 5-year-old laptop will typically draw more energy than on a modern, efficiency-optimized machine. This creates a tension: the environmental cost of manufacturing a new device is very high. The most sustainable choice is usually to extend the life of your current device as long as practical. If you are a heavy user (studying 4+ hours daily), however, a newer, more efficient device can eventually have a lower total footprint over its usable life, particularly on a carbon-intensive grid.
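Whether a new, more efficient device pays back its manufacturing emissions comes down to a simple ratio. Divide its embodied emissions by the annual emissions its lower power draw avoids. The sketch below is a minimal Python version. The 250 kg CO2e embodied figure, the wattages, the usage hours, and the grid intensities are illustrative assumptions, not measurements of any real device. The function name is hypothetical.

```python
from typing import Optional


def payback_years(new_device_embodied_kg: float, old_watts: float, new_watts: float,
                  hours_per_day: float, grid_g_per_kwh: float) -> Optional[float]:
    """Years of use before a new device's manufacturing emissions are repaid by its lower power draw.

    Returns None if the new device draws no less power than the old one.
    """
    saved_kw = (old_watts - new_watts) / 1000
    if saved_kw <= 0:
        return None
    saved_kg_per_year = saved_kw * hours_per_day * 365 * grid_g_per_kwh / 1000
    return new_device_embodied_kg / saved_kg_per_year


# Illustrative scenarios (all inputs assumed).
print(f"moderate use, average grid: {payback_years(250, 45, 25, 4, 400):.1f} years")
print(f"heavy use, coal-heavy grid: {payback_years(250, 50, 20, 8, 800):.1f} years")
```

Under these assumptions, a moderate user on an average grid would need roughly 21 years to break even, far beyond a typical device lifespan. A heavy user on a carbon-intensive grid with a large efficiency gain breaks even in about 3.6 years. That spread is why extending device life is usually the default recommendation.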

Can individual actions really make a difference when data centers are so huge?

Individual actions have a marginal direct impact, but their collective power is in market signaling. When millions of users preferentially choose platforms that are transparent about sustainability and opt for efficient behaviors (like closing apps), it creates demand for greener services. More importantly, your choice of which platform to use is powerful. Supporting companies that invest in green hosting, efficient AI, and transparency directs capital toward sustainable infrastructure. The largest lever is systemic, but user preference drives the system.